Augmented Reality: The Definition

Augmented reality (AR) is a live, direct or indirect view of a physical, real-world environment whose elements are augmented (or supplemented) by computer-generated sensory input such as sound, video, graphics, or GPS data. It is related to a more general concept called mediated reality, in which a computer modifies a view of reality (possibly even diminishing it rather than augmenting it). In short, the technology works by enhancing one’s current perception of reality.

In simple terms, augmented reality enhances your current view of the world. It sits in the space between ordinary reality, where everything is exactly what it appears to be, and virtual reality, where you are immersed in a world other than your actual surroundings. AR achieves this by adding computer-generated elements to your field of vision. Ivan Sutherland’s first demonstration of AR, for example, featured a line-drawn 3D cube that appeared to float in space in front of the viewer.

The Technology Behind Augmented Reality

The technology behind augmented reality has a wide range of real-world applications. One company, Magic Leap, is developing a learning tool for classroom use that will let students view holographic depictions of their curriculum. For example, instead of dissecting a squid to see how its unique internal structure works, a student could view a hologram of the squid with preset layers, even though the hologram exists only virtually.

How is this possible? An augmented reality system generates a composite view for the user that combines the real scene the user is viewing with a virtual scene generated by the computer, which augments the real scene with additional information. The computer-generated scene is designed to enhance the user’s sensory perception of the real world they are seeing or interacting with.
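One common way to build the composite view described above is per-pixel alpha blending: each output pixel mixes the camera’s pixel with the computer-generated pixel according to an opacity value. The sketch below is a minimal illustration, assuming images stored as nested lists of RGB tuples; the function name `composite` is hypothetical, and real AR systems perform this step on graphics hardware rather than in a Python loop.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]      # (R, G, B), each 0-255
Image = List[List[Pixel]]         # rows of pixels

def composite(real: Image, virtual: Image, alpha: List[List[float]]) -> Image:
    """Blend a virtual layer over a real camera frame (hypothetical helper).

    alpha[y][x] is the virtual layer's opacity at that pixel:
    0.0 shows only the real scene, 1.0 shows only the virtual overlay.
    """
    out: Image = []
    for real_row, virt_row, a_row in zip(real, virtual, alpha):
        row = []
        for (r1, g1, b1), (r2, g2, b2), a in zip(real_row, virt_row, a_row):
            # "Over" blend per channel: a * virtual + (1 - a) * real
            row.append((
                round(a * r2 + (1 - a) * r1),
                round(a * g2 + (1 - a) * g1),
                round(a * b2 + (1 - a) * b1),
            ))
        out.append(row)
    return out

# A 1x2 "camera frame": one grey pixel, one blue pixel
camera = [[(100, 100, 100), (0, 0, 255)]]
# A red overlay: fully opaque on the first pixel, half-transparent on the second
overlay = [[(255, 0, 0), (255, 0, 0)]]
mask = [[1.0, 0.5]]

print(composite(camera, overlay, mask))  # → [[(255, 0, 0), (128, 0, 128)]]
```

Where the mask is 0.0 the real scene passes through untouched, which is what lets the virtual squid appear inside an otherwise unchanged classroom.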

One of the first real-world applications of AR was in the US military. Fighter jets were fitted with a device called a heads-up display (HUD): a glass panel onto which indicators of the aircraft’s altitude, pitch, yaw, and heading were projected. This freed pilots from having to keep watch over dozens of individual gauges on their instrument panel. Heads-up displays are now being built into consumer automobiles, letting the general public benefit from computer-generated graphics placed in their field of vision. Drivers can see information ranging from navigation directions and upcoming traffic detours to local cafés and the closest public Wi-Fi hub.

Personal augmented-reality displays, which look similar to a normal pair of glasses, place informative graphics in your field of view, with audio that coincides with whatever you see. These enhancements are refreshed continually to reflect the movements of your head. Some of these devices, such as Google Glass, can also be used in combination with your mobile phone.
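Keeping graphics locked to the world as your head moves comes down to re-projecting each virtual object into the display every frame using the latest head orientation. The sketch below handles only left/right head rotation (yaw) with a simple pinhole-camera model. The function name `project_label`, the 500-pixel focal length, and the 640-pixel screen center are illustrative assumptions, not the parameters of Google Glass or any real headset.

```python
import math
from typing import Optional, Tuple

def project_label(point: Tuple[float, float], yaw_deg: float,
                  focal_px: float = 500.0, center_px: float = 640.0,
                  near: float = 0.1) -> Optional[float]:
    """Return the horizontal screen position (pixels) of a world-anchored point.

    point is (x, z) in metres in the horizontal plane, with the wearer's head
    at the origin: +x is to the right and +z is straight ahead at yaw 0.
    Positive yaw_deg means the head has turned to the right.
    Returns None if the point is behind (or too close to) the viewer.
    """
    x, z = point
    theta = math.radians(yaw_deg)
    # Express the point in head coordinates by projecting it onto the head's
    # current right direction (cos, -sin) and forward direction (sin, cos).
    x_head = x * math.cos(theta) - z * math.sin(theta)
    z_head = x * math.sin(theta) + z * math.cos(theta)
    if z_head < near:
        return None
    # Pinhole projection: screen offset = focal length * sideways distance / depth
    return center_px + focal_px * x_head / z_head

# A label 10 m straight ahead, re-projected each frame as the head turns right
for yaw in (0.0, 20.0, 45.0, 180.0):
    pos = project_label((0.0, 10.0), yaw)
    print(yaw, None if pos is None else round(pos))
# The label slides left (640, 458, 140) and is finally behind the viewer (None)
```

Turning your head right makes a fixed object drift left across the display, which is exactly the update a head-worn display must recompute continually for its graphics to appear anchored in the world.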

Augmented Reality for the Masses

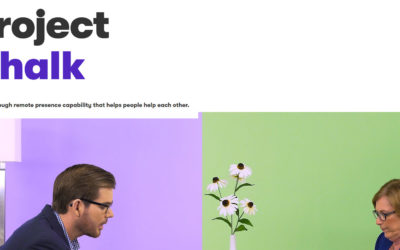

In February 2009, at the TED conference, Pattie Maes and Pranav Mistry presented an augmented-reality system they had developed as part of the MIT Media Lab’s Fluid Interfaces Group. They called it SixthSense. Using little more than a mirror, a lanyard, a small projector, four small colored finger caps, and a smartphone, they built a remarkably capable device. SixthSense is worn around the user’s neck on a lanyard; the smartphone’s camera “reads” the surroundings, the system interprets that data, and the projector displays the results onto a chosen surface. The colored finger caps let the user manipulate the projected information and enter commands. For instance, someone using SixthSense could go into a pharmacy to buy toothpaste: SixthSense would scan the toothpaste and pull up customer reviews, coupons, and health information.
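Colored finger caps turn gesture input into a computer-vision problem: find each cap in the camera image and follow it from frame to frame. A simple, widely used way to do that is color thresholding, sketched below for a single cap. This is an illustrative assumption, not the SixthSense team’s actual code; the function name `find_marker`, the target color, and the tolerance are hypothetical.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255

def find_marker(frame: List[List[Pixel]], target: Pixel,
                tolerance: int = 40) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of pixels close to the target color, or None."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, (r, g, b) in enumerate(row):
            # Count the pixel as part of the cap if every channel is within tolerance
            if (abs(r - target[0]) <= tolerance and
                    abs(g - target[1]) <= tolerance and
                    abs(b - target[2]) <= tolerance):
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None   # cap not visible in this frame
    return (sum_x / count, sum_y / count)

# A tiny 3x3 frame with a reddish cap covering two pixels
BLACK, RED = (0, 0, 0), (230, 20, 30)
frame = [
    [BLACK, BLACK, BLACK],
    [BLACK, RED,   RED],
    [BLACK, BLACK, BLACK],
]
print(find_marker(frame, target=(255, 0, 0)))  # → (1.5, 1.0)
```

Comparing the centroid of each cap across successive frames gives the finger’s motion, which software can then map to gestures such as dragging or pinching.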

When you walk down the toy aisle in a large retail store, have you noticed what’s in the Lego section now? Lego has created what it calls the Lego Digital Box. When a customer holds a Lego product up to the box, a camera scans the packaging, and the screen shows a fully built model on top of the box the customer is holding. Ikea is one of the few other retail pioneers in AR. With its 2013 catalog, customers could download a free mobile application that let them view items from the catalog as miniaturized 3D depictions on their smartphones or tablets. This allowed shoppers to see, for example, what the inside of a dresser they were considering would look like.

Planning Your Future

Going even further, some recently released interior design apps let users plan the inside of their homes using augmented reality. Not sure whether the couch you want to buy will fit where you want to put it? Easy: open the app, point the camera at the spot, and the screen will show what the space would look like with the couch in place. Why stop with couches, though? Why not go bigger? Construction workers can now put on AR glasses and see the blueprints for their project without leaving the job site. Construction foremen can view layered 360-degree images of their projects captured at different points in time. With these new technologies coming into play, AR has potential uses in nearly every industry, from interior design and food service to transportation and construction. AR will soon be everywhere. The technology is here; the time is now.